Hallucination-robust agentic workflows

Why your LLMs keep making things up (and how to fix it)

TL;DR

Hallucinations are important and may be inevitable - they're natural byproducts of LLM technology as "lossy compressors"

Error propagation in agentic workflows is the biggest concern - probabilistic errors compound across agents

Context augmentation provides partial solutions - RAG, browsing tools and general context augmentation matters, but only addresses some sources of hallucinations, not faulty reasoning

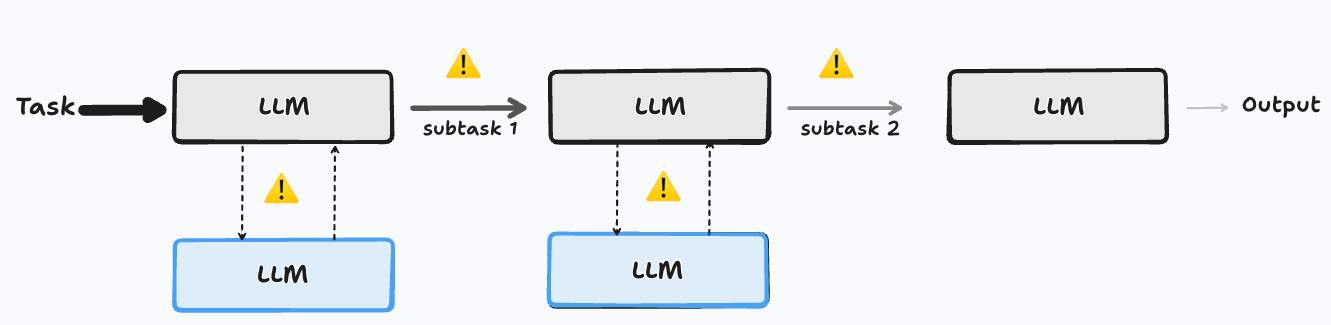

Multi-agent reviewer workflows are highly effective - augmenting your workflows with reviewer LLMs that validate the output is highly effective to improve the overall robustness of the system

Immediate action is needed - implement mitigation strategies now before hallucinations become bottlenecks

Prelude

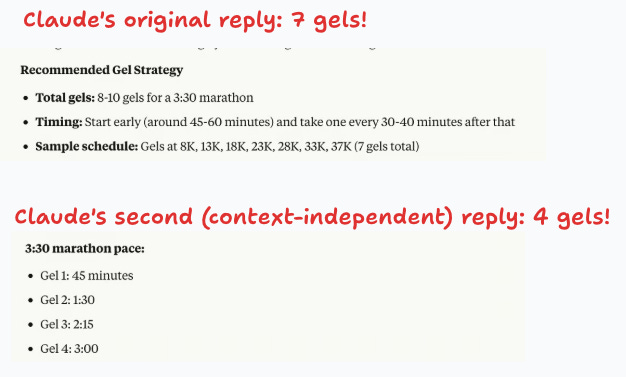

Preparing for this year’s Mexico City marathon, I asked Claude to recommend a gel consumption strategy for the race day. The first answer made sense to me: Claude recommended having 7 gels during the race. The night before the marathon I couldn’t find the answer, so I asked again, but this time I got a drastically different answer! This time Claude recommended taking only 4 gels during the race.1

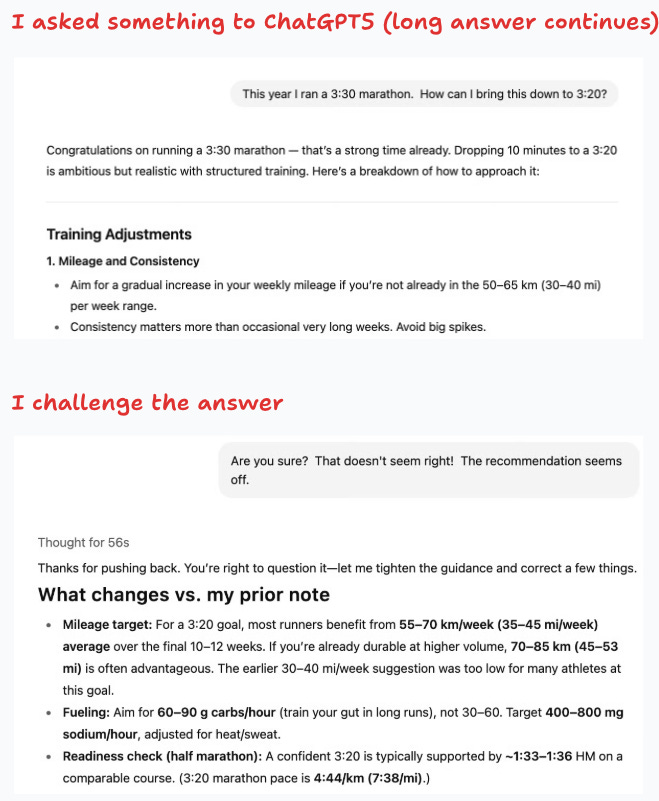

This lack of robustness in the answers can also be seen by challenging an answer with something like “are you sure? that doesn’t seem right” which seems to trigger an internal struggle for the LLM.

I asked Claude about what is behind this and it gave a reasonable answer:

Sycophancy and agreeableness

Context can change the answer

Failing to detect that a human is testing the LLM

Hallucinations’ uneven effect on different access tiers

Hallucinations may deter the massive and fast adoption of GenAI across all tiers. My interaction with Claude is an example of the impact on the general tier, and as such requires specific training to address within a company.2

For the general access tier — using the chat interface — specific training is required to mitigate the impact of hallucinations.

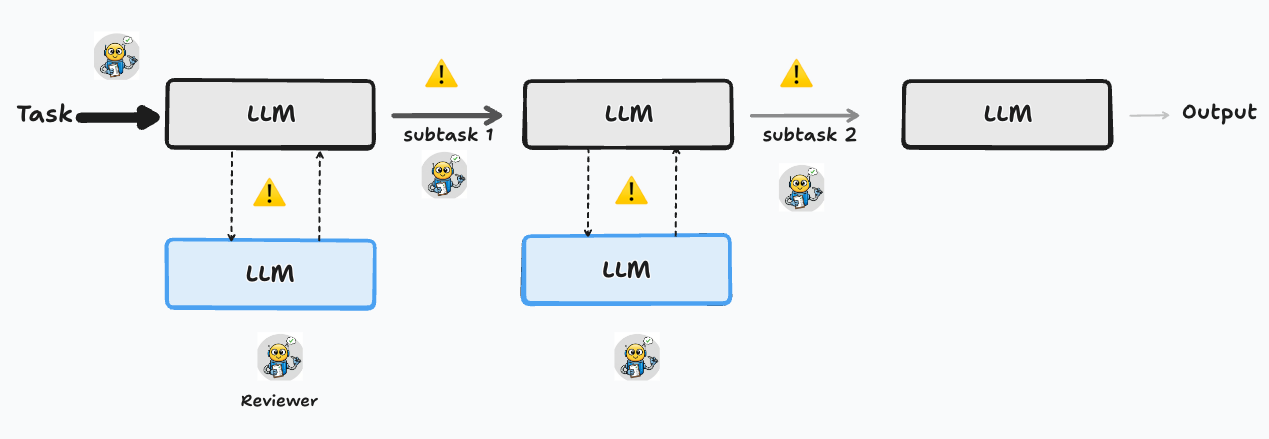

However, since agentic workflows have the potential to become the workforce for an AI-driven revolution, most of the concern should be placed on this tier.3 The reason is that, because of their probabilistic nature, error rates can easily propagate and compound, quickly deteriorating the quality of the final output.

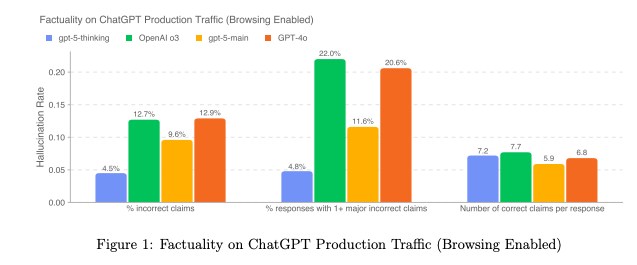

Mainly for this reason, major AI labs continue to put considerable effort into reducing hallucinations. For instance, in the GPT-5 System Card OpenAI states the importance of reducing hallucination rates, especially since “many API queries do not use browsing tools”. Google and Anthropic also report factuality metrics in their cards (Gemini 2.5 and Claude 4).

Typology of errors in LLMs

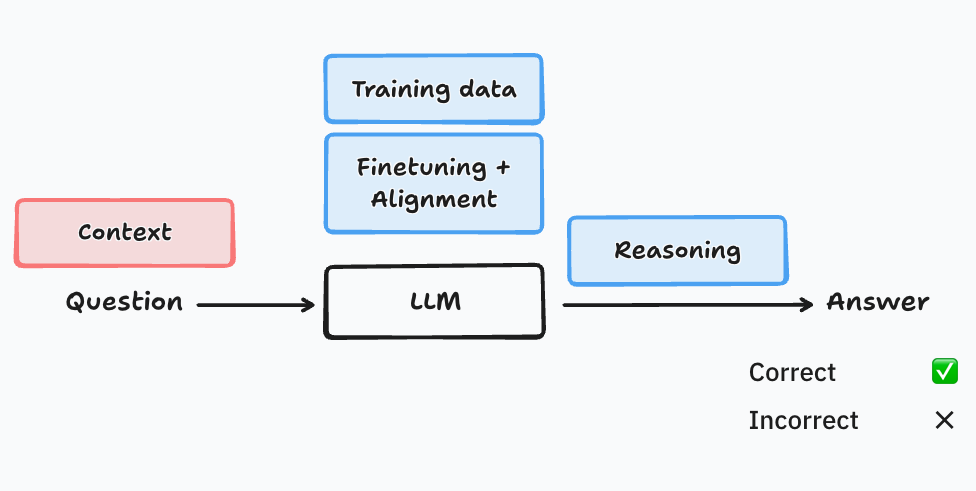

Large Language Models (LLMs) take inputs from us (instruction, question, rants, etc.) and reply by predicting the next word.4 For any given “question” and “answer”, we can in principle evaluate the accuracy or quality of the answer.5

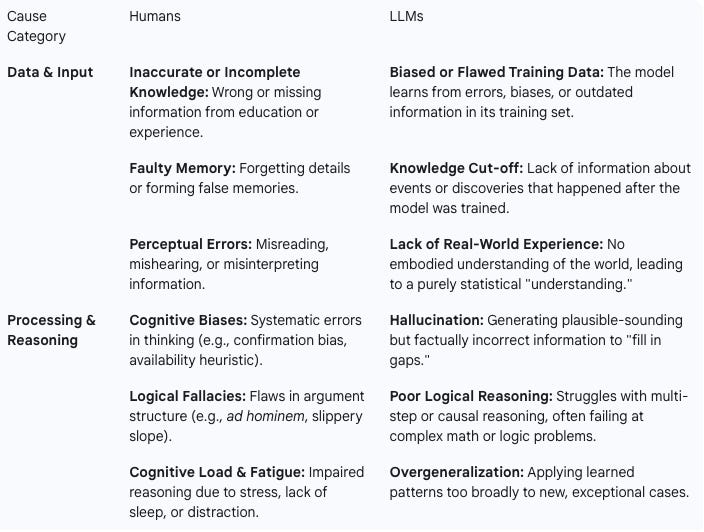

At a high level, an LLM can fail on the input and data sides, and on the processing and reasoning sides. On the data and input sides, questions may be ill-posed or unclear, the answer may depend on the context, or the training data may be biased, incomplete or outdated. On the processing side, LLMs may be finetuned or aligned for sycophancy or agreeableness — as opposed to truthfulness —, may hallucinate and make up an answer that makes perfect sense from a next-word prediction point of view, may engage in faulty reasoning, and may overgeneralize. The next table shows a comparison of why humans and LLMs may respond with errors.

Understanding hallucinations

Hallucinations can be defined as “the phenomenon in which the generated content appears to be nonsensical or unfaithful to the provided source content.”6 Not denying the intuitive appeal of this definition, it’s nonetheless hard to implement in practice with current models where massive datasets are created automatically.

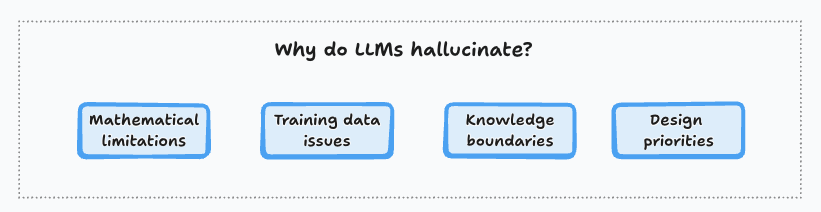

At a deep level, hallucinations are natural byproducts of the technology behind LLMs. Models can be seen as “lossy compressors” of the training data, making it inevitable that at least some fraction of the facts will be lost in the training stage.7 Finetuning and alignment may ameliorate this problem by incentivizing the LLM to say “it doesn’t know”.8

An important, and relatively well understood, cause for hallucinations can be found in training data issues and knowledge boundaries. Take “long-tail” or specialized knowledge that, by definition, has relatively low coverage in the training data by design so an LLM may not be able to establish and memorize the underlying facts. A similar problem arises when multiple, contradictory facts appear in the training data (i.e. the whole internet!), or any societal biases that contradict “established facts”.

One important limitation in our understanding of hallucinations is the lack of control over the training data with large models. For instance, it may be desirable to change different aspects of the training data, while leaving everything else constant, but this is hard to do with large datasets, and with LLMs scaling laws matter a lot.9

Context augmentation: mitigating the extent of hallucinations

What can you do about hallucinations? The most common solution has been to use Retrieval Augmented Generation (RAG). But more generally, what you need is context augmentation, that can be achieved by providing the necessary tools and resources to the LLMs.

Embed context augmentation into your agentic workflows by providing access to tools and relevant resources to the LLMs.

Naturally, this only helps with a fraction of the problem, since faulty reasoning may be the source of hallucinations in your workflow. More complex agentic workflows can be used to mitigate the different sources, for instance, by including reviewers in each stage where an action was taken (humans or LLMs). Commonly, these reviewers come from better (larger) models, or from models from a different AI lab.

What’s next

If you're building with LLMs, start implementing these hallucination mitigation strategies today:

For immediate impact: Add context augmentation to your current workflows through RAG or tool access. Even simple fact-checking steps can dramatically improve reliability.

For production systems: Design multi-agent review processes where different models verify critical outputs.

For your organization: Train your teams to recognize hallucination patterns and implement the "challenge the answer" approach I experienced with Claude. Question confident-sounding but suspicious responses.

The AI revolution depends on reliable agents. Start building robustness into your workflows now, before hallucinations become your bottleneck.

Another hillarious example can be found in this piece.

See for instance the documentation that Anthropic has created to reduce hallucinations.

Agentic workflows have several important advantages, including tool use, autonomy, memory and context augmentation. Another potential impact is in dealing with edge cases (e.g. MIT’s State of AI in Business).

Prediction is at the token level, but for the purpose of this post we can think of LLMs predicting words.

To be sure, there are many reasons why this accuracy may be hard to measure. At the first level, there are many questions where there’s no correct answer (e.g. “how many angels can dance on the head of a pin?”). There are also cases where there are more than one correct answer (“how can one get to the top of Mount Everest?“). Finally, semantic equivalence — sentences or phrases that look different but have the same meaning — also make evaluations difficult.

I take this definition from Huang, et.al. (2024) survey on the topic.

This research by Anthropic finds specific default refusal circuits (“interpretable building blocks”) that fire when the model declines to provide an answer. Some hallucinations arise when these circuits fail to fire.

This is just to say that having controlled experiments is easier done in small models, but it’s not obvious that any learnings will translate to the larger counterparts used in most business applications.